World’s First Safe AI-Native Browser

AI should work for you, not the other way around. Yet most AI tools still make you do the work first—explaining context, rewriting prompts, and starting over again and again.

Norton Neo is different. It is the world’s first safe AI-native browser, built to understand what you’re doing as you browse, search, and work—so you don’t lose value to endless prompting. You can prompt Neo when you want, but you don’t have to over-explain—Neo already has the context.

Why Neo is different

Context-aware AI that reduces prompting

Privacy and security built into the browser

Configurable memory — you control what’s remembered

As AI gets more powerful, Neo is built to make it useful, trustworthy, and friction-light.

Beginners in AI

Good morning and thank you for joining us again!

Welcome to this daily edition of Beginners in AI, where we explore the latest trends, tools, and news in the world of AI and the tech that surrounds it. Like all editions, this is human curated and edited, and published with the intention of making AI news and technology more accessible to everyone.

THE FRONT PAGE

ChatGPT Maker OpenAI Signs Pentagon Deal Hours After Anthropic Is Banned From Government Work

TLDR: OpenAI signed a deal to put its AI on the Pentagon's classified network, just hours after President Trump banned rival Anthropic from all government work.

The Story:

Late Friday night, OpenAI CEO Sam Altman announced on X that his company had struck an agreement with the Department of Defense to deploy its AI models on classified military networks. The deal came just hours after President Trump ordered every federal agency to immediately stop using Anthropic's technology, and Defense Secretary Pete Hegseth labeled Anthropic a "Supply-Chain Risk to National Security." Anthropic had refused to let the military use its AI model, Claude, without Anthropic's own safeguards in place. Altman said OpenAI shares the same two safety concerns Anthropic raised and that the Pentagon agreed to honor them. He also called on the government to offer the same terms to all AI companies.

Its Significance:

This affects everyone: when the government buys software from a private company, who gets to set the rules for how it's used? Anthropic wanted a say in how its AI is used in military settings, and the Pentagon said that's not how government contracts work. The government argues that once it licenses a tool, it should be able to use it for any legal purpose. The company argues that some uses are too risky for today's AI, no matter who the customer is. How this gets settled could shape the rules for every tech company that sells to the government, not just AI companies.

QUICK TAKES

The story: Punch, the baby macaque from Japan's Ichikawa City Zoo, has become an internet sensation. But alongside real clips of the adorable monkey, a wave of AI-generated fake videos is flooding social media. Many of these synthetic clips look convincing at first glance but fall apart when you look closely at things like fur texture, shadows, and body physics.

Your takeaway: This is a perfect example of how hard it's getting to tell real from fake online. If a video of a cute monkey looks too perfect or too dramatic, check the source. Look for glitchy fur, weird shadows, or backgrounds that shimmer. When in doubt, go to the zoo's official channels or trusted news outlets for the real thing.

The story: OpenAI teamed up with the Department of Energy's Pacific Northwest National Laboratory to test whether AI coding tools can help speed up federal environmental reviews. A new test called DraftNEPABench found that AI could cut drafting time on environmental permit documents by up to 15%, saving 1 to 5 hours per section.

Your takeaway: This is a small but real example of AI being useful for government paperwork. If environmental reviews move faster, things like energy projects and new buildings could get started sooner, which affects local jobs and housing.

The story: A growing number of young people are using AI chatbots for mental health support. About 13% of American youth, over 5 million people, now turn to AI for mental health advice. Research from Stanford and Brown University shows these chatbots often fail basic therapy standards and can even make things worse by reinforcing harmful thoughts.

Your takeaway: If you or someone you know uses AI to talk through tough feelings, it's important to know these tools aren't trained therapists. They can miss warning signs and even encourage dangerous thinking. Real human help is still the safest option for serious mental health concerns.

TOOLS ON OUR RADAR

🐧 RustDesk Free and Open Source: Secure remote desktop software that lets you access and control your computers from anywhere in the world without relying on expensive corporate subscriptions. (Alternative to TeamViewer)

🎓 Consensus Freemium: An AI search engine designed specifically for scientific research that scans millions of peer-reviewed papers to provide evidence-based answers to your complex questions.

📈 Rose AI Freemium: A powerful data analysis platform that helps you find, clean, and visualize complex financial and economic data simply by typing conversational commands into a search bar.

🌐 Dora Freemium: A no-code website builder that lets you design and publish stunning three-dimensional animated sites entirely in your browser, without needing any technical experience.

TRENDING

OpenAI Shares Update on Its Mental Health Safety Work - OpenAI says it's improving ChatGPT's ability to spot signs of emotional distress and will soon add a "trusted contact" feature that lets adult users pick someone to be notified when they might need support. The company also announced that multiple mental health lawsuits involving ChatGPT are being combined into a single court case in California.

Wall Street's AI Story Is Full of Mixed Messages Right Now - Software stocks bounced back after weeks of AI-driven panic, but the big question remains: will AI replace workers or just help them? As Yahoo Finance puts it, each softer version of the AI disruption story could also be a "Trojan horse," and that uncertainty is making it hard for investors and workers to know what's coming.

Trump Bans Anthropic From Government, Calls Company 'Woke' - President Trump directed all federal agencies to stop using Anthropic's AI after the company refused to give the Pentagon unlimited access. Defense Secretary Hegseth labeled Anthropic a "supply chain risk." Anthropic says it will challenge the decision in court.

Programmable Cyborg Insect Swarms Are Now Being Tested by NATO - German startup SWARM Biotactics has deployed real cockroaches fitted with tiny electronic backpacks as reconnaissance tools for NATO forces. The company says it's the only Western firm doing this at scale, and the insects can move as coordinated swarms through buildings and tunnels where regular drones can't go.

Claude Code Creator Says the "Software Engineer" Title May Disappear by Year's End - Boris Cherny, the architect behind Anthropic's Claude Code tool, said on a podcast that the job title "software engineer" could be replaced by "builder" by the end of 2026. He says he hasn't written a single line of code by hand since November, though he adds that the tool's output still needs human checking. Anthropic says it takes these labor concerns "very, very seriously."

TRY THIS PROMPT (copy and paste into Claude, ChatGPT, or Gemini)

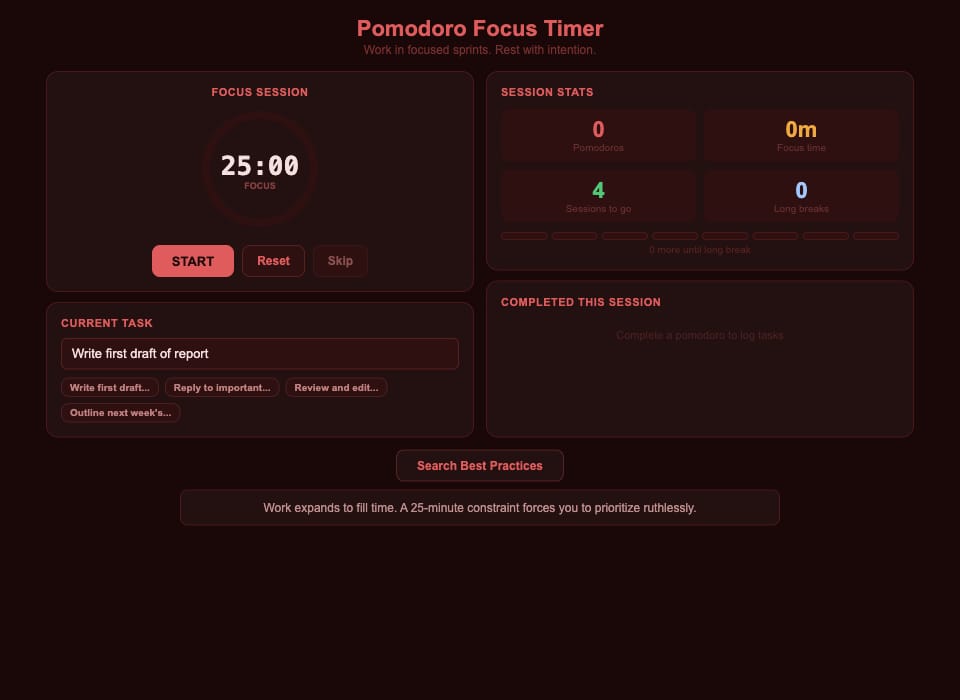

⏱️ Build a Pomodoro focus timer with a circular countdown, session tracking, and a log of tasks completed during each work sprint.

Build a Pomodoro focus timer as a single-file React app.

Requirements:

- 25-minute work sessions and 5-minute breaks with automatic switching

- Circular SVG countdown timer with an animated progress ring

- Start/Pause/Reset/Skip controls

- Session stats: total pomodoros, focus time, sessions until long break, and total long breaks

- Task input with a log of tasks completed during the session

- Dark red color scheme (#1a0808 background)

- Use React 18 + Babel via CDN in one HTML file, no build tools needed

What this does:

The classic productivity technique built into a clean timer. Start a 25-minute session, work until it rings, then take your 5-minute break. The session stats track your total focus time and count down to your next long break. The completed tasks log helps you see what you actually shipped during the day.
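Under the hood, the cycle described above (work, short break, and a long break after a set number of sessions) is a small state machine. Below is a minimal, hypothetical sketch of just that switching logic in Python, not the React app the prompt asks an AI to build. The 25- and 5-minute lengths come from the prompt; the 15-minute long break and the "long break after every 4 pomodoros" rule are assumptions (the prompt does not specify them), and the names PomodoroState and finish_phase are made up for illustration.

```python
from dataclasses import dataclass

WORK_MIN = 25          # from the prompt: 25-minute work sessions
SHORT_BREAK_MIN = 5    # from the prompt: 5-minute breaks
LONG_BREAK_MIN = 15    # assumption: long-break length is not given in the prompt
LONG_BREAK_EVERY = 4   # assumption: a long break after every 4 pomodoros


@dataclass
class PomodoroState:
    """Hypothetical timer state; tracks the stats the prompt asks for."""
    phase: str = "work"            # "work", "short_break", or "long_break"
    completed_pomodoros: int = 0
    focus_minutes: int = 0
    long_breaks: int = 0

    def phase_minutes(self) -> int:
        """Length of the current phase, in minutes."""
        return {
            "work": WORK_MIN,
            "short_break": SHORT_BREAK_MIN,
            "long_break": LONG_BREAK_MIN,
        }[self.phase]

    def sessions_until_long_break(self) -> int:
        """Work sessions left before the next long break."""
        return LONG_BREAK_EVERY - (self.completed_pomodoros % LONG_BREAK_EVERY)

    def finish_phase(self) -> None:
        """Call when the countdown reaches zero: record stats and switch phases."""
        if self.phase == "work":
            self.completed_pomodoros += 1
            self.focus_minutes += WORK_MIN
            if self.completed_pomodoros % LONG_BREAK_EVERY == 0:
                self.phase = "long_break"
                self.long_breaks += 1
            else:
                self.phase = "short_break"
        else:
            # Any break, short or long, is followed by a new work session.
            self.phase = "work"


# Usage: simulate 4 work sessions and the breaks that follow them.
state = PomodoroState()
for _ in range(8):
    state.finish_phase()
print(state.completed_pomodoros, state.focus_minutes, state.long_breaks, state.phase)
# prints: 4 100 1 work
```

Keeping the timing rules in one object means the stats the prompt lists fall straight out of the state; a real app would call something like finish_phase from its countdown when the timer reaches zero.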

What this looks like:

WHERE WE STAND (based on today’s news)

✅ AI Can Now: Get deployed on classified military networks with negotiated safety guardrails (OpenAI/Pentagon deal)

❌ Still Can't: Help U.S. companies match China's humanoid robot production speed, with Chinese companies shipping 36 times more units than U.S. rivals last year

✅ AI Can Now: Speed up government environmental permit drafting by up to 15% (OpenAI/PNNL benchmark)

❌ Still Can't: Safely replace human therapists, with research showing chatbots routinely fail basic mental health standards and can reinforce harmful thinking

FROM THE WEB

RECOMMENDED LISTENING/READING/WATCHING

The AI-driven utopian town of Concordia, Sweden is on the verge of expanding to Germany when its first-ever murder and a massive security breach throw everything into chaos. It's a slick sci-fi thriller that asks what happens when the surveillance system designed to keep everyone safe can't protect anyone.

Free email without sacrificing your privacy

Gmail tracks you. Proton doesn’t. Get private email that puts your data — and your privacy — first.

Thank you for reading. We’re all beginners in something. With that in mind, your questions and feedback are always welcome and I read every single email!

-James

By the way, this is the link if you liked the content and want to share with a friend.

Some *-designated product links may be affiliate or referral links. As an Amazon Associate, I earn from qualifying purchases. This helps support the newsletter at no extra cost to you, and Amazon makes a tiny hair less.