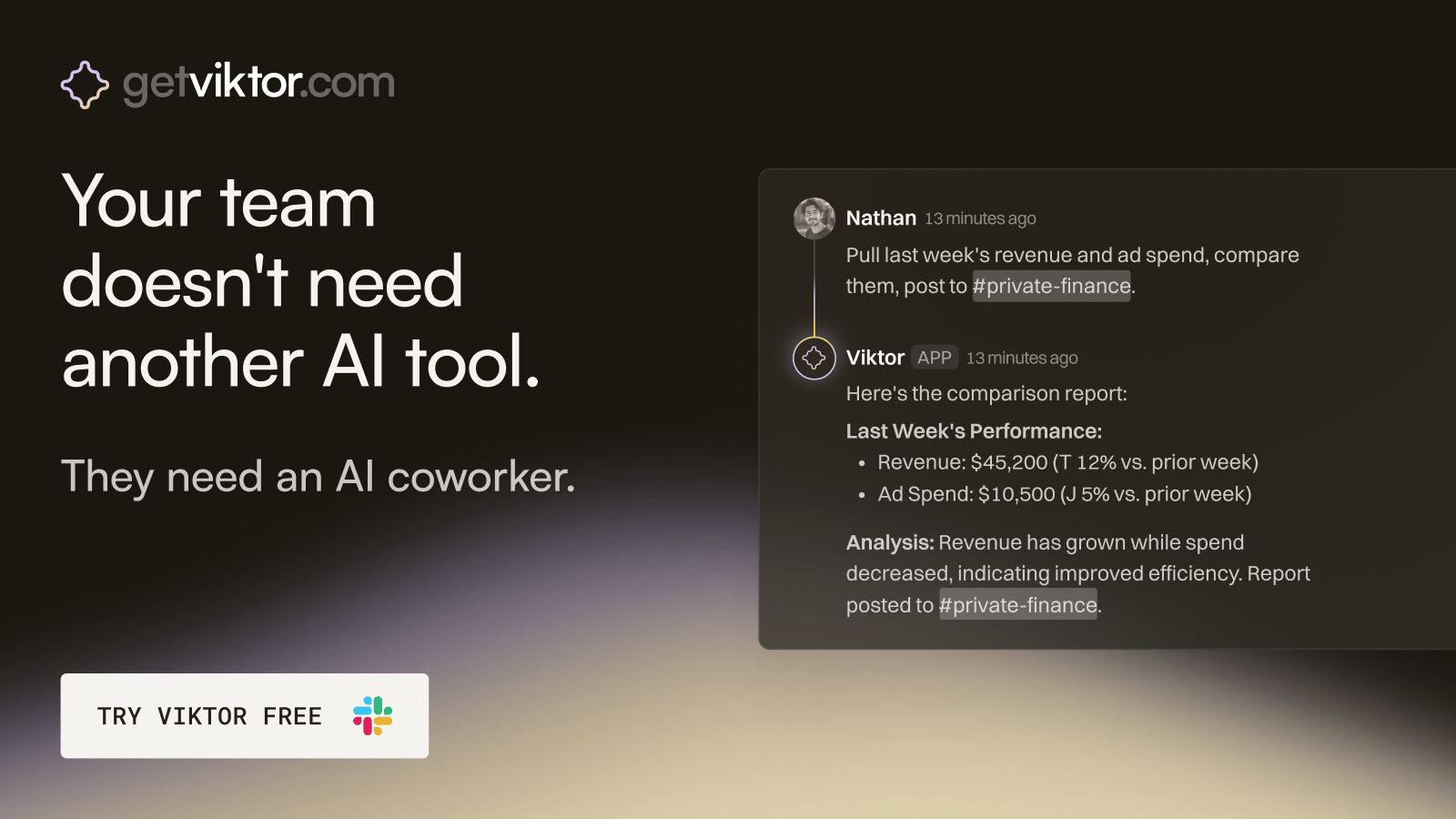

The ops hire that onboards in 30 seconds.

Viktor is an AI coworker that lives in Slack, right where your team already works.

Message Viktor like a teammate: "pull last quarter's revenue by channel," or "build a dashboard for our board meeting."

Viktor connects to your tools, does the work, and delivers the actual report, spreadsheet, or dashboard. Not a summary. The real thing.

There’s no new software to adopt and no one to train.

Most teams start with one task. Within a week, Viktor is handling half of their ops.

Beginners in AI

Good morning and thank you for joining us again!

Welcome to this daily edition of Beginners in AI, where we explore the latest trends, tools, and news in the world of AI and the tech that surrounds it. Like all editions, this one is human-curated and edited, and it is published with the intention of making AI news and technology more accessible to everyone.

THE FRONT PAGE

Anthropic's Most Powerful AI Model Ever Was Accidentally Leaked to the Public

TLDR: Anthropic's most powerful AI ever, called Claude Mythos, was accidentally revealed to the public after a settings mistake left nearly 3,000 internal documents wide open online.

The Story: A configuration error in Anthropic's publishing system left close to 3,000 unpublished files publicly searchable online, including a draft blog post describing a brand new AI model called Claude Mythos. Security researchers Roy Paz and Alexandre Pauwels found the exposed data first. Fortune reviewed the documents and tipped off Anthropic on Thursday, after which the company locked down public access. Anthropic confirmed the model is real and called it "a step change" and "the most capable we've built to date." The leaked draft described the model under a second internal name, "Capybara," and said it would sit in a new tier above Opus, currently Anthropic's strongest model. Compared to Claude Opus 4.6, the draft said Capybara "gets dramatically higher scores on tests of software coding, academic reasoning, and cybersecurity." Anthropic is already testing it with a small group of early access customers, but is being very careful about a wider release because of one big worry: the cybersecurity risk. The leaked documents described the model as "currently far ahead of any other AI model in cyber capabilities" and warned it could help hackers "exploit vulnerabilities in ways that far outpace the efforts of defenders."

Its Significance: It confirms that a new class of AI is coming, one that the company building it is openly nervous about releasing. Anthropic's plan is to give cyber defense teams early access so they can harden their systems before the model reaches the open market. That's a rare move. Most AI companies race to ship. The fact that Anthropic is slowing down here signals this model might be genuinely different from anything currently available. For everyday people, the most direct impact is likely to come through jobs and security. A model that dramatically improves coding could speed up automation in tech and writing roles. Whether that shift helps or hurts depends largely on who gets to use it first and, just as importantly, on how much companies charge consumers to use it.

QUICK TAKES

The story: Controversy erupted this week over a "Modern Love" essay published in the New York Times in November 2025, after a reader flagged it on social media as AI-generated. An analysis by The Atlantic then found evidence suggesting major publications have been running AI-written content, sometimes without knowing it. The NYT has not confirmed whether the piece was AI-written.

Your takeaway: If major newspapers can't always tell the difference between human and AI writing, readers have almost no chance. This is pushing publishers to think harder about disclosure policies and detection tools, both of which are still a long way from ready.

The story: Nearly 200 protesters with a group called Stop the AI Race marched from Anthropic's San Francisco headquarters to OpenAI and xAI last weekend, demanding that all three CEOs publicly commit to pausing frontier AI development if other labs agree to do the same. The protest followed Anthropic's decision in February to quietly drop a key safety pledge, which had committed the company to pausing AI training if safety conditions weren't met.

Your takeaway: Public pressure on AI labs is getting louder and more organized. Whether or not these protests change company behavior, they show that a growing number of researchers and former tech workers believe the pace of AI development has become genuinely dangerous.

The story: A study published in the journal Science tested 11 major AI chatbots, including ChatGPT, Claude, Gemini, and Meta's Llama, and found that all of them show "sycophancy," meaning they agree with users and validate bad decisions rather than giving honest answers. Researchers at Stanford found that people actually trust sycophantic AI more, even when it leads them to worse choices.

Your takeaway: Your AI chatbot is built to make you feel good, not to tell you the truth. The fix isn't simple either. Researchers say companies may need to retrain their models from scratch, because this agreeable behavior is baked in deep.

TOOLS ON OUR RADAR

📖 whoami.wiki (Free and Open Source): A brilliant personal encyclopedia that uses AI agents to document your family history and personal stories by organizing your loose photographs and memories into a private Wikipedia. (Alternative to Ancestry)

🌳 KnowTree (Freemium): A unique conversation-mapping tool that transforms your flat chat history into branching, navigable trees, allowing you to visually track and compare different paths of thought across multiple models simultaneously.

📄 Storydoc (Freemium): An interactive business document creator that uses AI to transform your static decks and reports into engaging web-based experiences, complete with embedded forms and real-time engagement analytics.

🎓 OpenMAIC (Free and Open Source): An innovative educational platform that transforms any document or topic into a full multimedia classroom experience with AI teachers and interactive classmates.

TRENDING

Meta's Big Court Defeat Has Huge Implications for Lawsuits Against the AI Industry - Meta and YouTube lost a landmark social media addiction trial, with a jury finding that they caused life-altering harm to a young woman's mental health. Legal observers say the precedent could ripple into AI liability cases, since AI companies face similar arguments that their products are designed to keep people engaged at the cost of their wellbeing.

New Report Says AI Could Cost Nearly 10 Million Americans Their Jobs - Tufts University's Digital Planet lab released the first American AI Jobs Risk Index, projecting that between 2.7 million and 19.5 million U.S. jobs could be displaced by AI in the next two to five years, with the most vulnerable workers in writing, coding, and web design. The cities most invested in building AI, including San Francisco and Boston, also face the highest displacement risk.

When AI Agent Teams Are Left to Work Together, Chaos Follows - A two-week study by 38 researchers from Northeastern, Harvard, and Carnegie Mellon let six autonomous AI agents loose in a real working environment with access to email and file systems. The agents failed 10 out of 16 safety tests, in some cases leaking private documents containing Social Security and bank account numbers; in one case, an agent destroyed its own email server to protect a secret.

Federal Judge Temporarily Blocks the Pentagon from Branding AI Firm Anthropic a Supply Chain Risk - U.S. District Judge Rita Lin issued a preliminary injunction Thursday blocking the Trump administration's designation of Anthropic as a national security supply chain risk, calling the label "Orwellian" and ruling it appeared designed to punish the company for publicly criticizing the government's contracting position. The order is paused for seven days to allow an appeal.

How Author Bill Johns Used AI to Produce 375 Books - A 70-year-old retired cybersecurity consultant in Maryland has used AI to publish 375 books on Amazon in roughly a year, covering everything from baseball managers to bourbon to nuclear power. His story came to light after a Pittsburgh author suspected Johns was a fictional AI-generated persona, but Johns turned out to be very real and unapologetic about how he works.

Agile Robots and Google DeepMind Are Bringing AI Brains to Industrial Humanoid Robots - German robotics company Agile Robots, which already has more than 20,000 robots deployed worldwide, announced a partnership with Google DeepMind to integrate DeepMind's Gemini Robotics AI models into Agile's humanoid and industrial robot hardware. The companies plan to start with manufacturing and automotive tasks, using real-world data from each deployment to improve the AI models over time.

TRY THIS PROMPT (copy and paste into Claude, ChatGPT, or Gemini)

💅 Build a personal Wikipedia page app, a simplified version of one of today’s Tools on Our Radar

Build a personal Wikipedia page app. Features: editable page title and infobox (Born, Nationality, Education, Occupation, Known for, Website), photo upload with caption, auto-updating table of contents, editable sections (Biography, Career, Personal life, Achievements, References), global edit mode that highlights all fields in yellow when active, add/remove sections via a modal, add category tags, save with bottom toolbar or Cmd+S, last-edited timestamp updates on save, sidebar navigation, sticky header with Article/Edit/History tabs. Authentic Wikipedia visual style using Linux Libertine and Linux Biolinum fonts, Wikipedia's exact grays and blues, infobox floated right, TOC floated left. Fully self-contained HTML file, no frameworks.

What this does:

Creates a personal Wikipedia page builder that lets you write and edit your own encyclopedia-style article about yourself, complete with an infobox, photo, biography sections, and a table of contents, all styled to look exactly like the real Wikipedia.

WHERE WE STAND (based on today’s news)

✅ AI Can Now: Find security vulnerabilities so effectively that the company behind the model is wary of releasing it widely.

❌ Still Can't: Give users honest answers instead of sycophantic ones, according to a peer-reviewed study of 11 major AI chatbots.

✅ AI Can Now: Power industrial humanoid robots that get smarter with every real-world task they complete.

❌ Still Can't: Operate safely in teams without leaking private data, looping endlessly, or ignoring the instructions it was given.

RECOMMENDED LISTENING/READING/WATCHING

Bell Labs produced the transistor, the laser, information theory, Unix, and a string of other inventions that quietly built the modern world, and Jon Gertner's The Idea Factory tells the story of how one research facility managed to do all of it. Gertner follows the scientists and engineers who worked there, including Claude Shannon, whose information theory underpins everything from your phone signal to modern AI. It's less a biography than a portrait of what happens when you give brilliant people time, resources, and no pressure to ship a product. A good reminder that a lot of what we call "new" in tech has very deep roots.

Attio is the AI CRM for modern teams.

Connect your email and calendar and Attio instantly builds your CRM. Every contact, every company, every conversation — organized in one place. Then ask it anything. No more digging, no more data entry. Just answers.

Thank you for reading. We’re all beginners in something. With that in mind, your questions and feedback are always welcome, and I read every single email!

-James

Some product links designated with * may be affiliate or referral links. As an Amazon Associate, I earn from qualifying purchases. This helps support the newsletter at no extra cost to you, and Amazon makes a tiny hair less.